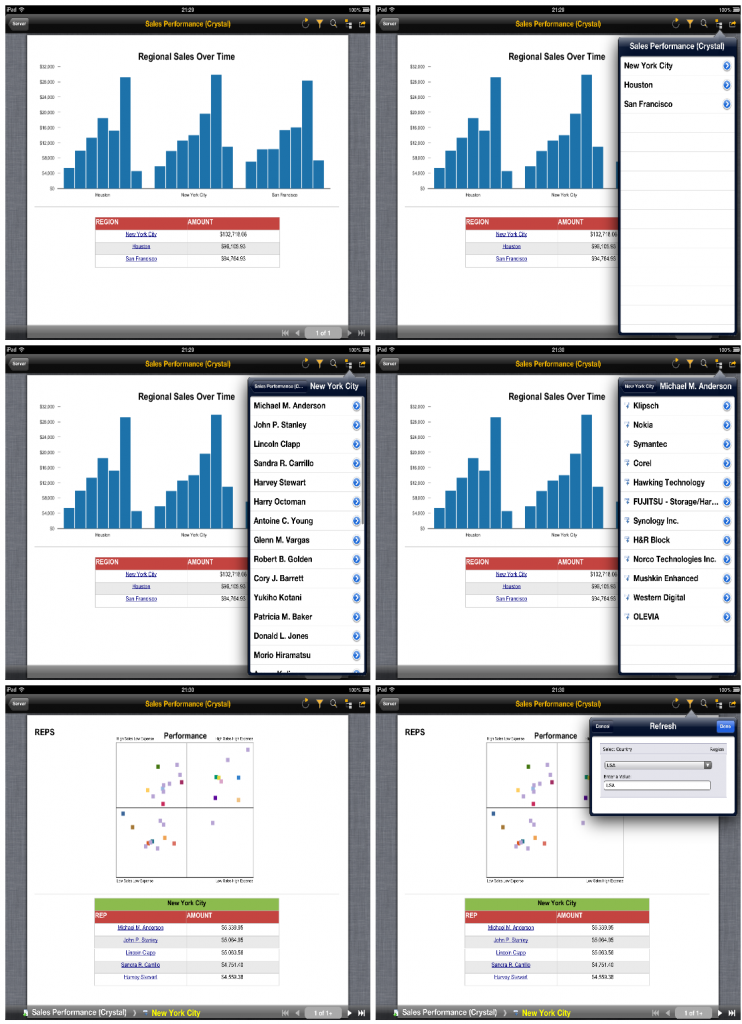

I recently received several questions about connecting Tableau to SAP BI (Business Objects) as a data source. Tableau already has direct connectivity to HANA, however many users out there are looking for ways to connect their existing webi (web intelligence) reports to Tableau. So, I set to look for some solutions to this problem and was able to develop two good solutions that are automated and allow users to leverage the investment they already made in setting up webi reports through Tableau in an automated fashion.

I started out by examining the data connections available in Tableau and looking for possible candidates I can use to connect to SAP BI and webi. Two options caught my attention immediately as viable connectivity options, since they are relatively open: the “Other Databases (ODBC)” connection and the “OData” connection.

My first course of investigation was around the ODBC option. I know that Crystal Reports for example has an ODBC driver that can connect to an XML feed. So, in theory, I should be able to create an XML feed off a BI service published from a webi report, and connect using this driver.

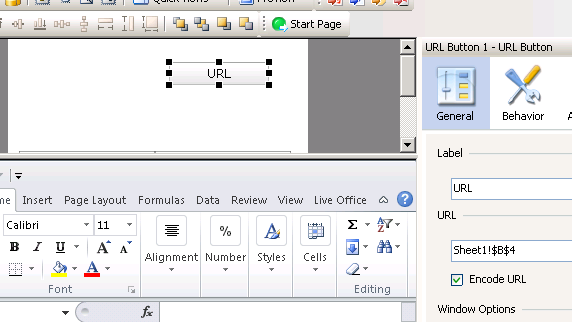

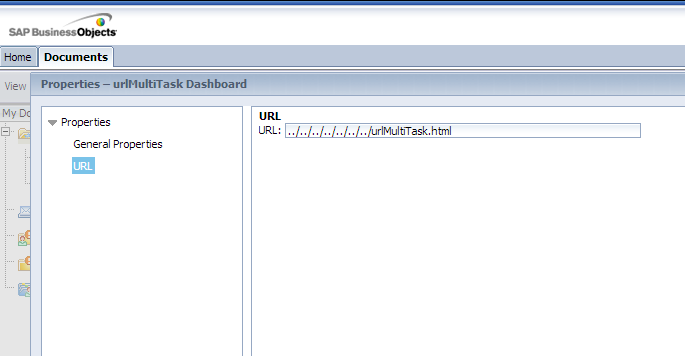

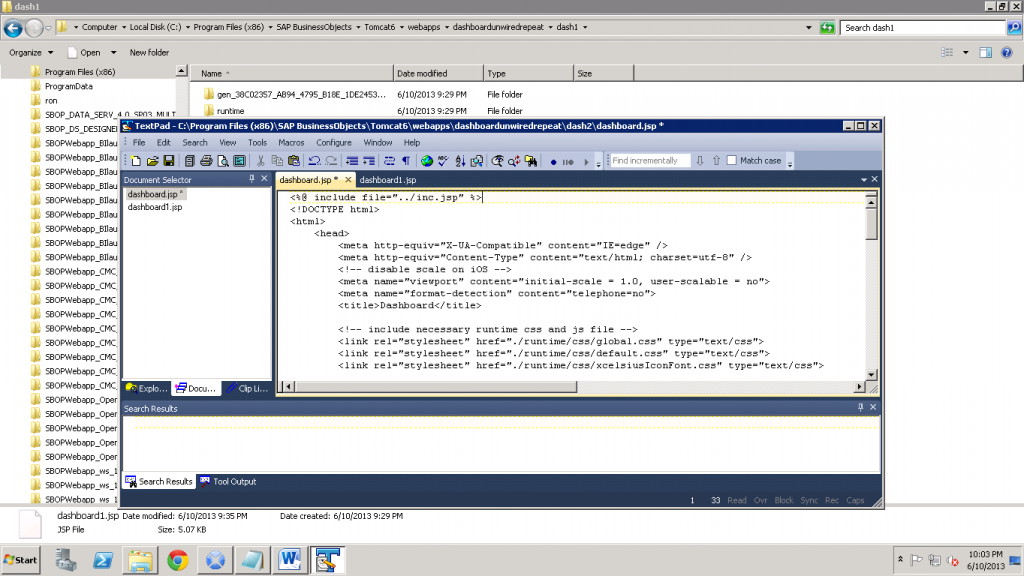

Trying to use the Crystal Reports driver was a bust. Technically, this would have worked, but the driver, produced by DataDirect is licensed for use with Crystal only and trying to use it with Tableau (or any other client) produce an error message stating the driver can only be used for Crystal. If you happen to have access to a DataDirect or other XML ODBC driver, you can convert the BI Service SOAP response into an XML feed (see jsp code below), and use that to build a DSN you can connect Tableau to. In my searches, I was only able to find commercially licensed XML ODBC drivers, so my second approach, writing my own OData producer became more relevant.

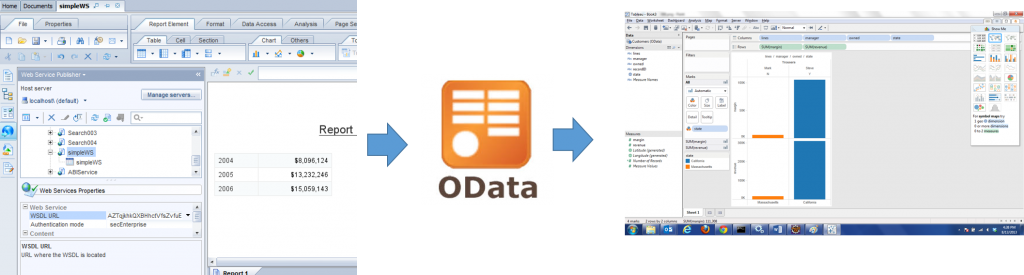

OData is a relatively new, but very popular, internet data exchange protocol and it defines ways to send and request information from a service. There are several implementations for it, and I ended up choosing the java odata4j framework for my experiment.

I started out by setting up the odata4j project in Eclipse and getting two examples I was interested in working: the XmlDataProducerExample which describes how to read an XML feed and expose it as an OData producer, and the ExampleProducerFactory example which demonstrates how to expose the OData producer in Tomcat. I ended up using the Jersey based XML example in my working prototype, but I would most certainly look to host this in Tomcat directly in a real world situation.

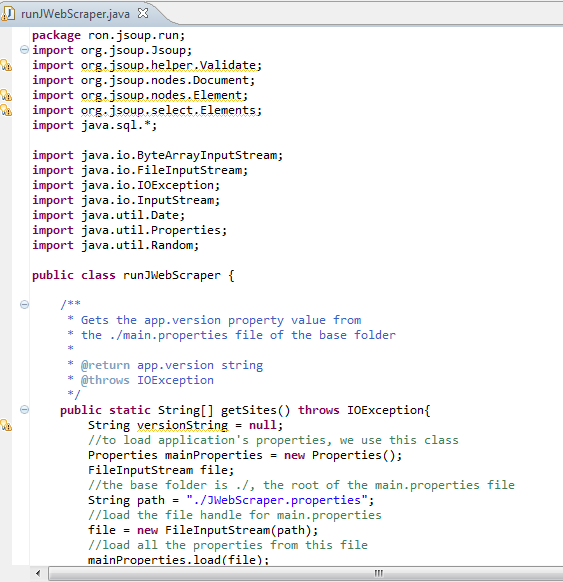

So, using the odata4j examples, I created a java application and added a class to read my own XML feed instead of the provided one. I also removed the portions of the OData framework that would allow users to interact with the feed in terms of making changes to the data (not needed in our scenario, which is reporting only). So, my main class connecting the XML feed looks like so:

package org.odata4j.examples.producer;

import static org.odata4j.examples.JaxRsImplementation.JERSEY;

import java.io.BufferedInputStream;

import java.io.IOException;

import java.io.InputStream;

import java.net.MalformedURLException;

import java.net.URL;

import java.net.URLConnection;

import java.util.ArrayList;

import java.util.List;

import java.util.Map;

import javax.xml.bind.annotation.XmlElement;

import javax.xml.bind.annotation.XmlRootElement;

import javax.xml.stream.XMLEventReader;

import javax.xml.stream.XMLInputFactory;

import javax.xml.stream.XMLStreamException;

import javax.xml.stream.events.XMLEvent;

import org.odata4j.core.OEntities;

import org.odata4j.core.OEntity;

import org.odata4j.core.OEntityId;

import org.odata4j.core.OEntityKey;

import org.odata4j.core.OExtension;

import org.odata4j.core.OFunctionParameter;

import org.odata4j.core.OProperties;

import org.odata4j.core.OProperty;

import org.odata4j.core.Throwables;

import org.odata4j.edm.EdmDataServices;

import org.odata4j.edm.EdmEntityContainer;

import org.odata4j.edm.EdmEntitySet;

import org.odata4j.edm.EdmEntityType;

import org.odata4j.edm.EdmFunctionImport;

import org.odata4j.edm.EdmProperty;

import org.odata4j.edm.EdmSchema;

import org.odata4j.edm.EdmSimpleType;

import org.odata4j.examples.AbstractExample;

import org.odata4j.examples.ODataServerFactory;

import org.odata4j.examples.producer.jpa.northwind.Customers;

import org.odata4j.exceptions.NotImplementedException;

import org.odata4j.producer.BaseResponse;

import org.odata4j.producer.CountResponse;

import org.odata4j.producer.EntitiesResponse;

import org.odata4j.producer.EntityIdResponse;

import org.odata4j.producer.EntityQueryInfo;

import org.odata4j.producer.EntityResponse;

import org.odata4j.producer.ODataProducer;

import org.odata4j.producer.QueryInfo;

import org.odata4j.producer.Responses;

import org.odata4j.producer.edm.MetadataProducer;

import org.odata4j.producer.resources.DefaultODataProducerProvider;

/**

* This example shows how to expose xml data as an atom feed.

*/

public class XmlDataProducerExampleRon2 extends AbstractExample {

public static final String endpointUri = "http://localhost:8010/XmlDataProducerExampleRon2.svc";

public static void main(String[] args) {

XmlDataProducerExampleRon2 example = new XmlDataProducerExampleRon2();

example.run(args);

}

private void run(String[] args) {

System.out.println("Please direct your browser to " + endpointUri + "/Customers");

// register the producer as the static instance, then launch the http server

DefaultODataProducerProvider.setInstance(new XmlDataProducer());

new ODataServerFactory(JERSEY).hostODataServer(endpointUri);

}

@XmlRootElement

public class CustomersList {

@XmlElement

Customers[] customers;

}

/**

* Sample ODataProducer for providing xml data as an atom feed.

*/

public class XmlDataProducer implements ODataProducer {

private final EdmDataServices metadata;

private XMLInputFactory xmlInputFactory;

public XmlDataProducer() {

// build the metadata here hardcoded as example

// one would probably generate it from xsd schema or something else

String namespace = "XmlExample";

Listproperties = new ArrayList();

properties.add(EdmProperty.newBuilder("state").setType(EdmSimpleType.STRING));

properties.add(EdmProperty.newBuilder("lines").setType(EdmSimpleType.STRING));

properties.add(EdmProperty.newBuilder("manager").setType(EdmSimpleType.STRING));

properties.add(EdmProperty.newBuilder("owned").setType(EdmSimpleType.STRING));

properties.add(EdmProperty.newBuilder("revenue").setType(EdmSimpleType.DOUBLE));

properties.add(EdmProperty.newBuilder("margin").setType(EdmSimpleType.DOUBLE));

properties.add(EdmProperty.newBuilder("recordID").setType(EdmSimpleType.DOUBLE));

ListentityTypes = new ArrayList();

EdmEntityType.Builder type = EdmEntityType.newBuilder().setNamespace(namespace).setName("Customers").addKeys("recordID").addProperties(properties);

entityTypes.add(type);

ListentitySets = new ArrayList();

entitySets.add(EdmEntitySet.newBuilder().setName("Customers").setEntityType(type));

EdmEntityContainer.Builder container = EdmEntityContainer.newBuilder().setName(namespace + "Entities").setIsDefault(true).addEntitySets(entitySets);

EdmSchema.Builder modelSchema = EdmSchema.newBuilder().setNamespace(namespace + "Model").addEntityTypes(entityTypes);

EdmSchema.Builder containerSchema = EdmSchema.newBuilder().setNamespace(namespace + "Container").addEntityContainers(container);

metadata = EdmDataServices.newBuilder().addSchemas(containerSchema, modelSchema).build();

xmlInputFactory = XMLInputFactory.newInstance();

}

@Override

public EdmDataServices getMetadata() {

return this.metadata;

}

/**

* Returns OEntities build from xml data. In the real world the xml data

* could be filtered using the provided queryInfo.filter.

* The real implementation should also respect

* queryInfo.top and queryInfo.skip.

*/

@Override

public EntitiesResponse getEntities(String entitySetName, QueryInfo queryInfo) {

EdmEntitySet ees = getMetadata().getEdmEntitySet(entitySetName);

URL url =null;

URLConnection urlConnection = null;

InputStream is=null;

try {

url = new URL("http://myserver.com/mycontext/getSource.jsp");

urlConnection = url.openConnection();

is = new BufferedInputStream(urlConnection.getInputStream());

} catch (MalformedURLException e) {

// TODO Auto-generated catch block

e.printStackTrace();

} catch (IOException e) {

// TODO Auto-generated catch block

e.printStackTrace();

}

XMLEventReader reader = null;

try {

// transform the xml to OEntities with OProperties.

// links are omitted for simplicity

reader = xmlInputFactory.createXMLEventReader(is);

Listentities = new ArrayList();

List> properties = new ArrayList>();

boolean inCustomer = false;

String id = null;

String data = null;

while (reader.hasNext()) {

XMLEvent event = reader.nextEvent();

if (event.isStartElement()) {

if ("customers".equals(event.asStartElement().getName().getLocalPart())) {

inCustomer = true;

}

} else if (event.isEndElement()) {

String name = event.asEndElement().getName().getLocalPart();

if ("customers".equals(name)) {

entities.add(OEntities.create(ees, OEntityKey.create(id), properties, null));

properties = new ArrayList>();

inCustomer = false;

} else if (inCustomer) {

if ("recordID".equals(name)) {

id = data;

}

properties.add(OProperties.string(name, data));

}

} else if (event.isCharacters()) {

data = event.asCharacters().getData();

}

}

return Responses.entities(entities, ees, null, null);

} catch (XMLStreamException ex) {

throw Throwables.propagate(ex);

} finally {

try {

if (reader != null) reader.close();

} catch (XMLStreamException ignore) {}

try {

is.close();

} catch (IOException ignore) {}

}

}

@Override

public CountResponse getEntitiesCount(String entitySetName, QueryInfo queryInfo) {

throw new NotImplementedException();

}

@Override

public EntitiesResponse getNavProperty(String entitySetName, OEntityKey entityKey, String navProp, QueryInfo queryInfo) {

throw new NotImplementedException();

}

@Override

public CountResponse getNavPropertyCount(String entitySetName, OEntityKey entityKey, String navProp, QueryInfo queryInfo) {

throw new NotImplementedException();

}

@Override

public void close() {}

@Override

public EntityResponse createEntity(String entitySetName, OEntity entity) {

throw new NotImplementedException();

}

@Override

public EntityResponse createEntity(String entitySetName, OEntityKey entityKey, String navProp, OEntity entity) {

throw new NotImplementedException();

}

@Override

public void deleteEntity(String entitySetName, OEntityKey entityKey) {

throw new NotImplementedException();

}

@Override

public void mergeEntity(String entitySetName, OEntity entity) {

throw new NotImplementedException();

}

@Override

public void updateEntity(String entitySetName, OEntity entity) {

throw new NotImplementedException();

}

@Override

public EntityResponse getEntity(String entitySetName, OEntityKey entityKey, EntityQueryInfo queryInfo) {

throw new NotImplementedException();

}

@Override

public EntityIdResponse getLinks(OEntityId sourceEntity, String targetNavProp) {

throw new NotImplementedException();

}

@Override

public void createLink(OEntityId sourceEntity, String targetNavProp, OEntityId targetEntity) {

throw new NotImplementedException();

}

@Override

public void updateLink(OEntityId sourceEntity, String targetNavProp, OEntityKey oldTargetEntityKey, OEntityId newTargetEntity) {

throw new NotImplementedException();

}

@Override

public void deleteLink(OEntityId sourceEntity, String targetNavProp, OEntityKey targetEntityKey) {

throw new NotImplementedException();

}

@Override

public BaseResponse callFunction(EdmFunctionImport name, Map params, QueryInfo queryInfo) {

throw new NotImplementedException();

}

@Override

public MetadataProducer getMetadataProducer() {

return null;

}

@Override

public > TExtension findExtension(Class clazz) {

return null;

}

}

}

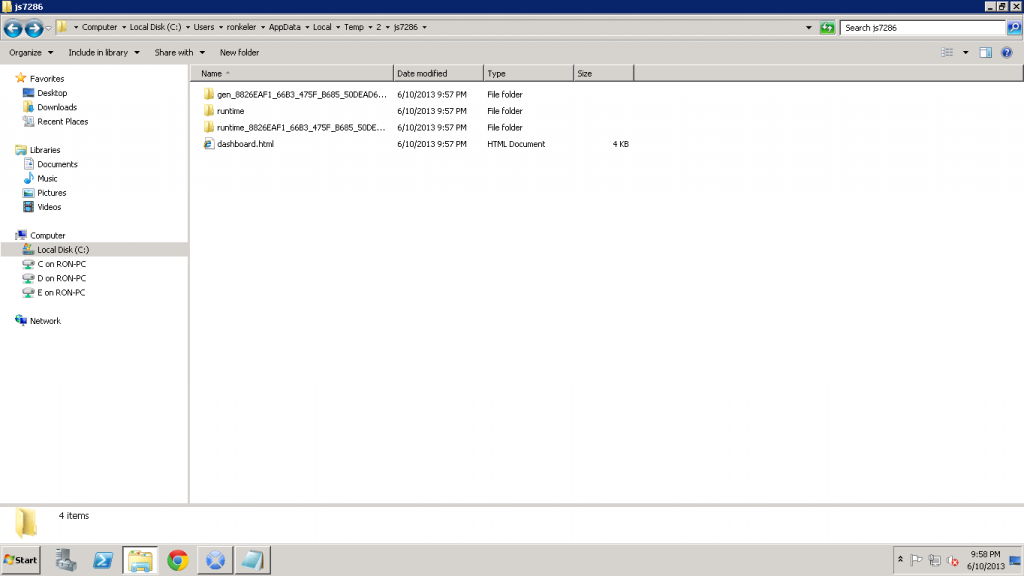

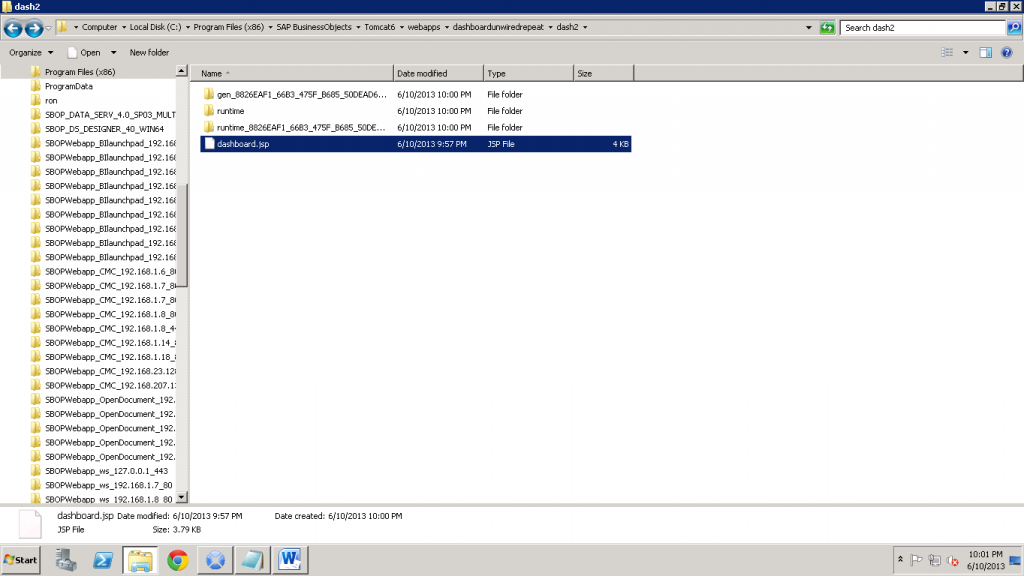

Notice the line url = new URL(“http://myserver.com/mycontext/getSource.jsp”);

This is the .jsp (can be a servlet, or our Bogoboards Gateway) that converts a BI Service into a simple XML feed. This .jsp looks like so:

<%@ page language="java" contentType="text/xml; charset=UTF-8" pageEncoding="UTF-8"%>

<%@ page import="com.crystaldecisions.sdk.framework.IEnterpriseSession,

com.crystaldecisions.sdk.framework.CrystalEnterprise,

com.crystaldecisions.sdk.exception.SDKException, com.crystaldecisions.sdk.framework.ISessionMgr,

com.crystaldecisions.sdk.occa.security.ILogonTokenMgr, com.businessobjects.bcm.*,tableau_source_rk_pkg.*,

javax.xml.rpc.Service,java.net.URL, java.util.*, java.text.NumberFormat,

java.math.RoundingMode" %>

<%@ page trimDirectiveWhitespaces="true"%>

<%

try{

//in this example, hard coded BO account, in real life will integrate auth or obtain from form

String username = "myusername";

String password = "mypwd";

String token = "";

//Authenticate user and get BO session

IEnterpriseSession enterpriseSession = CrystalEnterprise.getSessionMgr().logon(username, password, "cmsname:6400", "secEnterprise");

String serSess = enterpriseSession.getSerializedSession();

ILogonTokenMgr tokenMgr = enterpriseSession.getLogonTokenMgr();

token = tokenMgr.getDefaultToken();

session.setAttribute("serSess",serSess);

session.setAttribute("EnterpriseSession",enterpriseSession);

session.setAttribute("token",token);

}

catch(Exception e){

System.out.println(new java.util.Date()+": error on .jsp: "+e);

response.sendRedirect("out.html");

}

%>

<%

String str = "";

String rowStr = null;

NumberFormat formatter = NumberFormat.getCurrencyInstance(java.util.Locale.US);

formatter.setRoundingMode(RoundingMode.HALF_EVEN);

formatter.setMaximumFractionDigits(0);

URL endpoint = new URL("http://server.com/dswsbobje/qaawsservices/queryasaservice/biws?cuid=SOMEBISERVICECUID&authType=secEnterprise&locale=en_US&timeout=60&ConvertAnyType=true");

BIServicesSoapStub stub = new BIServicesSoapStub(endpoint,null);

try{

GetReportBlock_tableau_source_rk parameters = newGetReportBlock_tableau_source_rk();

parameters.setGetFromLatestDocumentInstance(true);

//In this example, reading report cache data, can set setRefresh to true to hit the DB each time

//parameters.setRefresh(true);

parameters.setResetState(true);

QaaWSHeader request_header = new QaaWSHeader();

request_header.setSerializedSession((String)session.getAttribute("serSess"));

//Call the soap service and get the response

GetReportBlock_tableau_source_rkResponse res = stub.getReportBlock_tableau_source_rk(parameters, request_header);

//Iterate through the response and parse it out to xml format

//In this example, hard coded parsing, can use the header to parse out dynamically

java.lang.Object[][] table = res.getTable();

for (int i=0;i<table.length;i++) {

for (int x=0;x<table[i].length;x++) {

if(x==table[i].length-1) {

rowStr = rowStr+"<customers><state>"+(String)table[i][1]+"</state><lines>"+

(String)table[i][2]+"</lines><manager>"+(String)table[i][3]+"</manager><owned>"+(String)table[i][4]+"</owned><revenue>"+

(Double)table[i][5]+"</revenue><margin>"+(Double)table[i][6]+"</margin><recordID>"+(Double) table[i][0]+"</recordID></customers>";}

}

}

}

catch(Exception e) {

System.out.println(new java.util.Date()+": Error in get and display data: " + e);

}

str = "<?xml version='1.0' encoding='utf-8'?><customersList>"+

rowStr+

"</customersList>";

out.println(str); //output the xml

%>

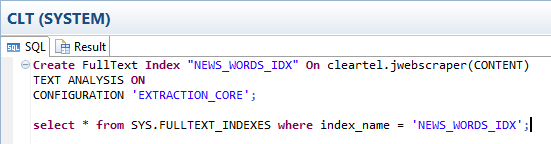

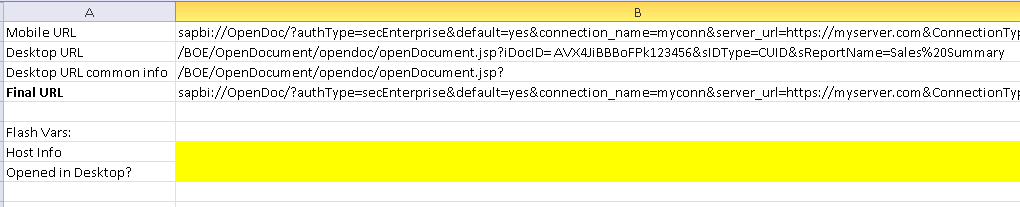

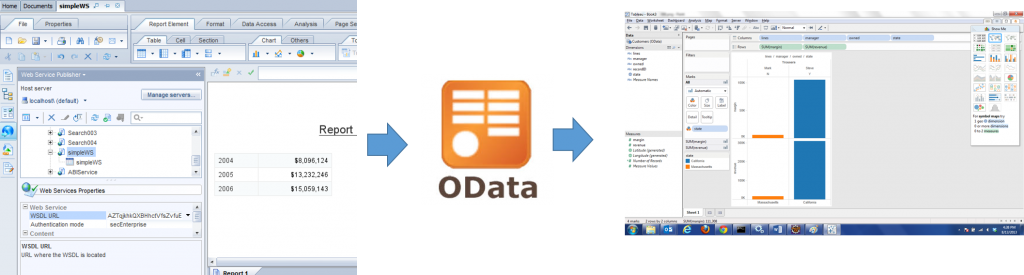

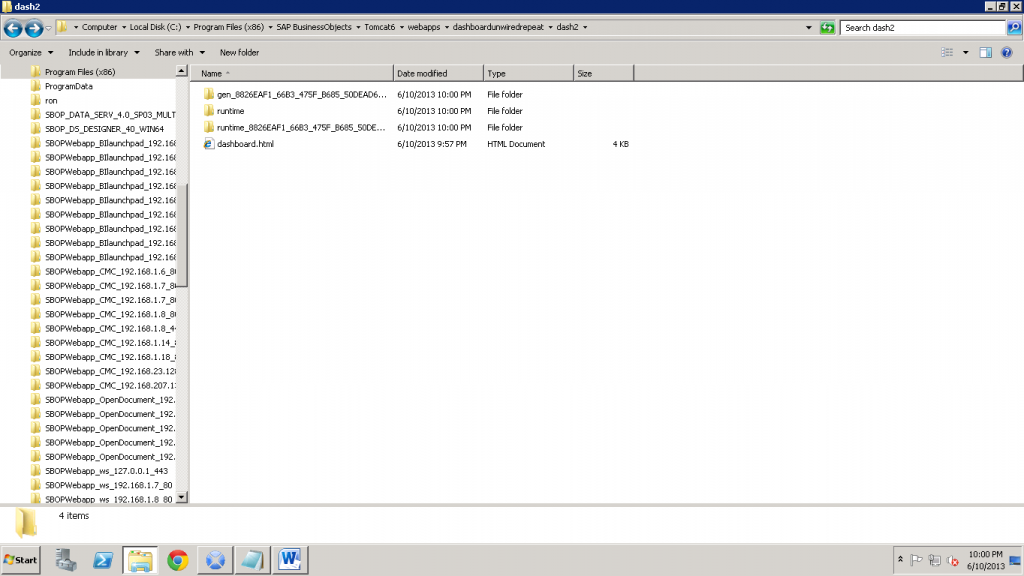

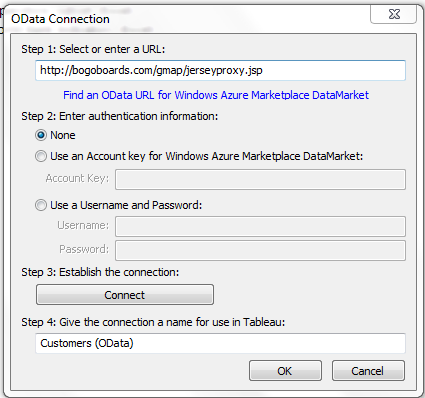

These are the two main components of the solution. The rest is more setup, less code. So now, my java app calls a BI Service that is being converted to XML and exposes it as an OData producer, so ANY client that understands OData can read it. If you like to try out this OData service, you can point your Tableau to: http://bogoboards.com/gmap/jerseyproxy.jsp

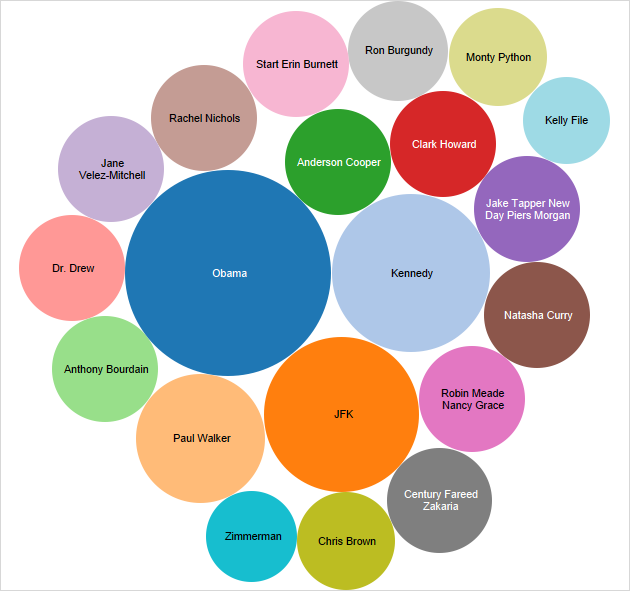

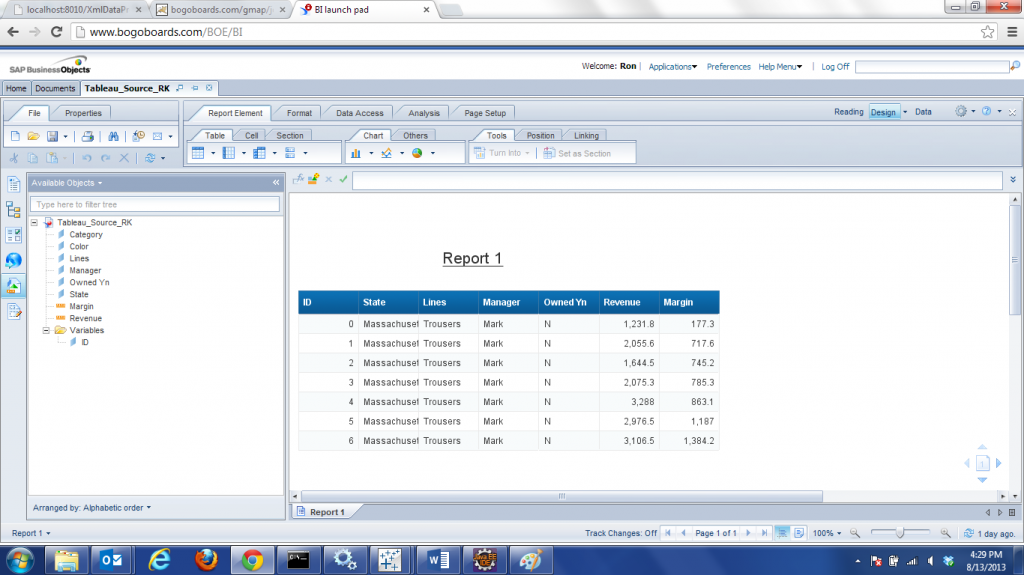

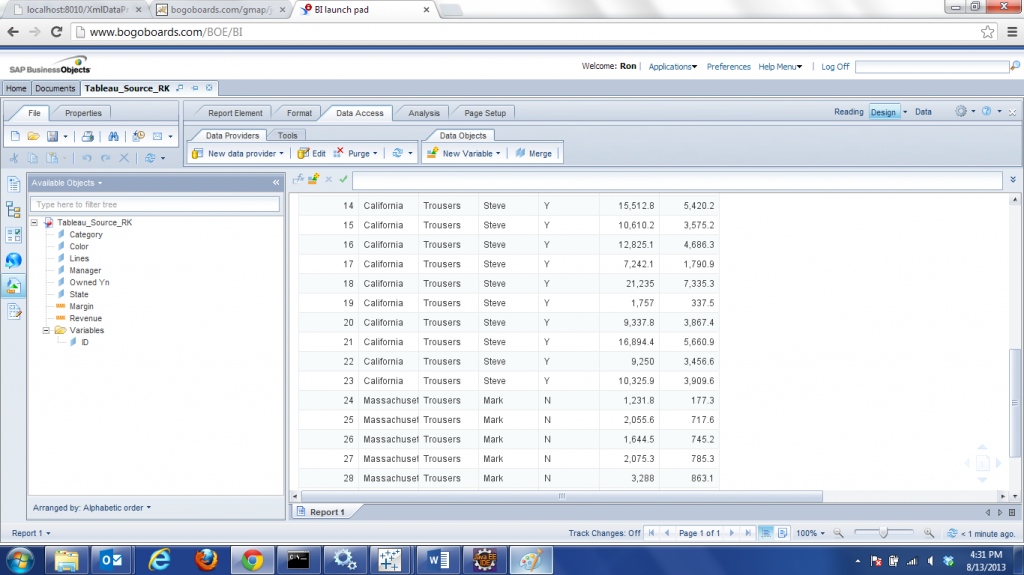

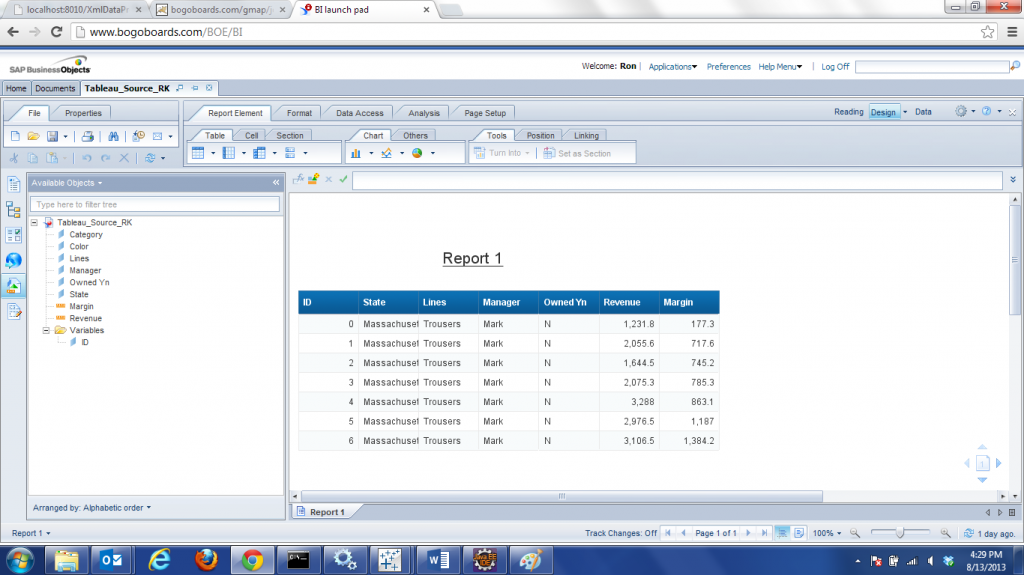

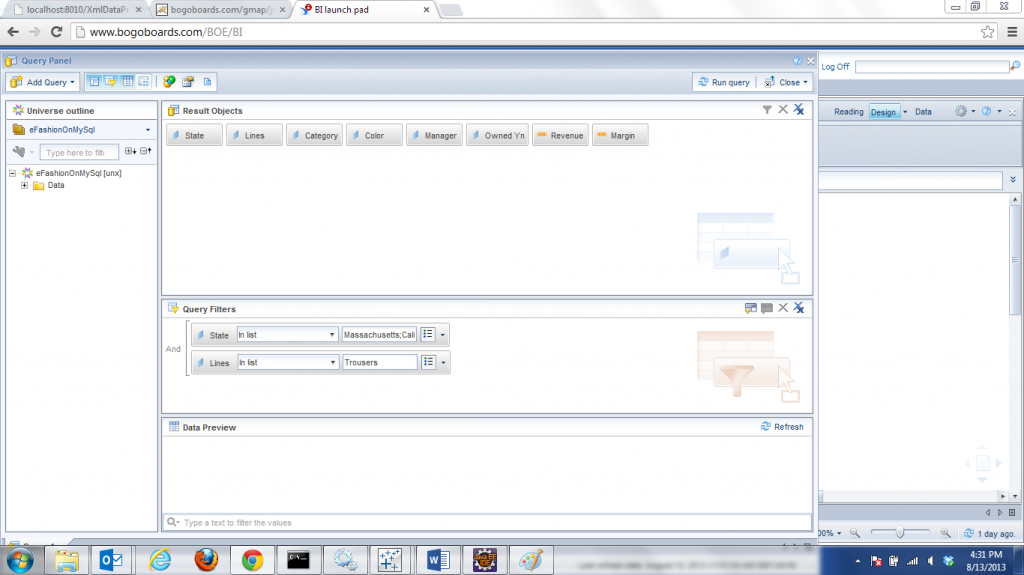

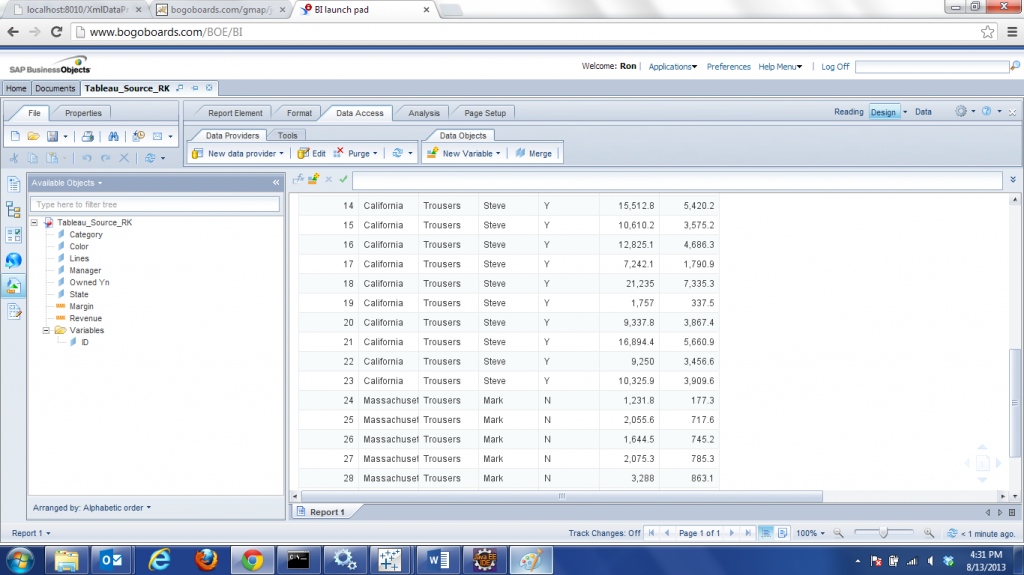

The OData producer for this example is exposed through a proxy on Tomcat. The data might look familiar, it’s a webi report based on eFashion universe, limited to a couple of states and one line of clothing, to keep things light and simple for this example…

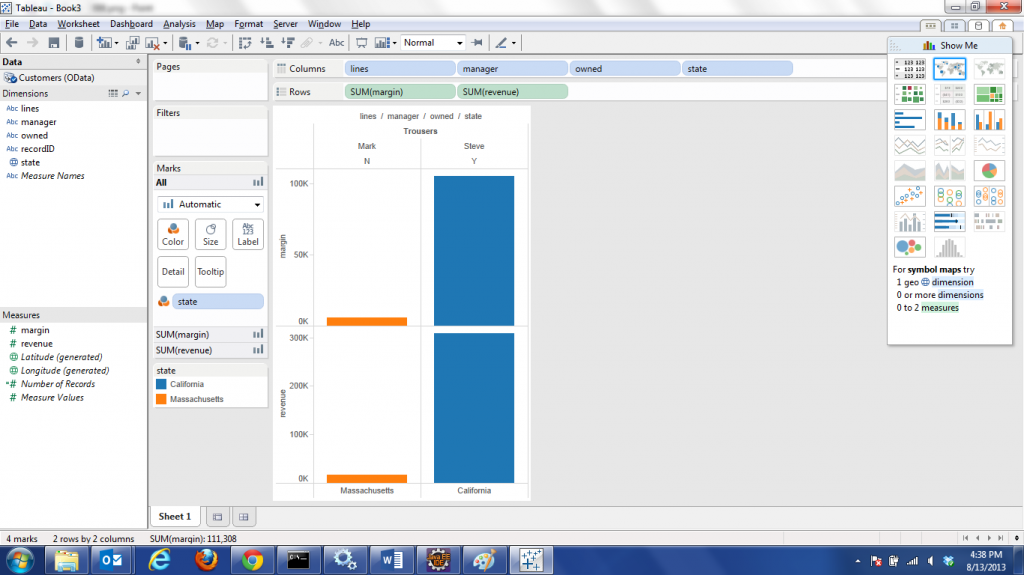

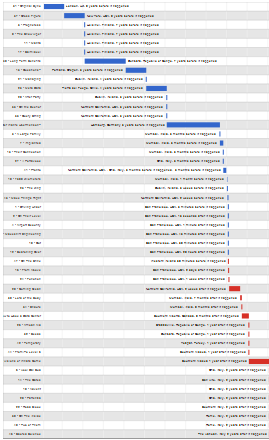

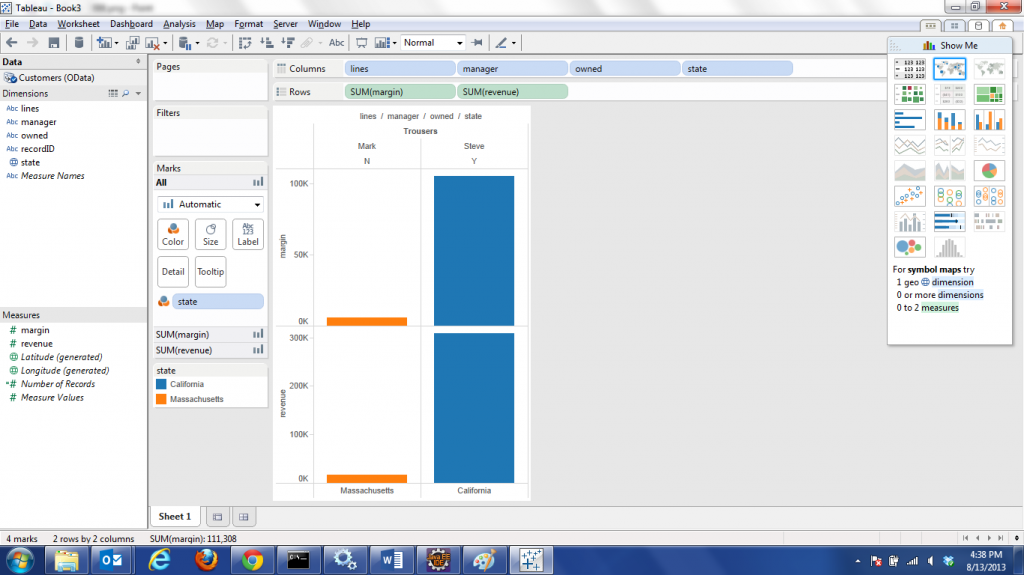

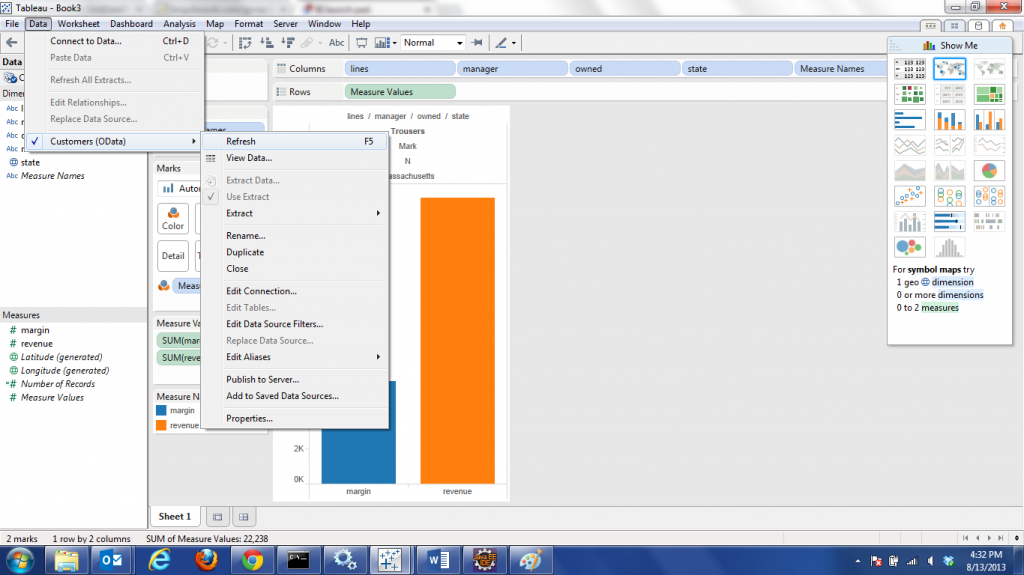

So, armed with this URL, I was now able to connect Tableau to my report based OData producer, make changes in webi to modify the data, and refresh the Tableau dashboard to automatically update the dashboard! Here are some screen shots of the process I used to test out the live refresh functionality:

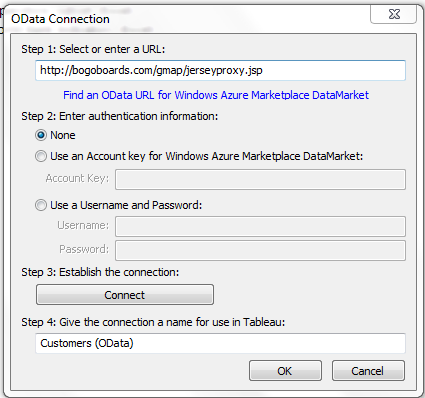

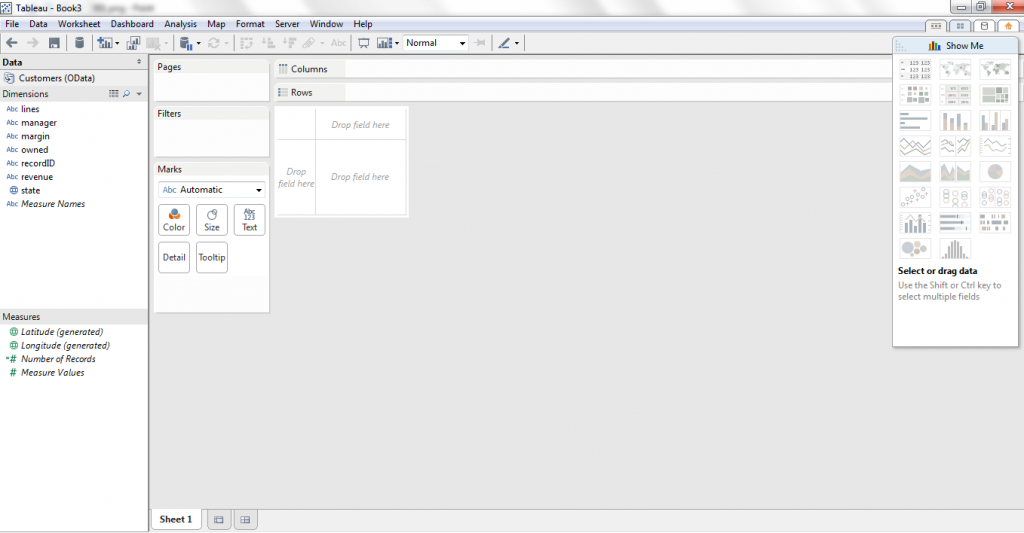

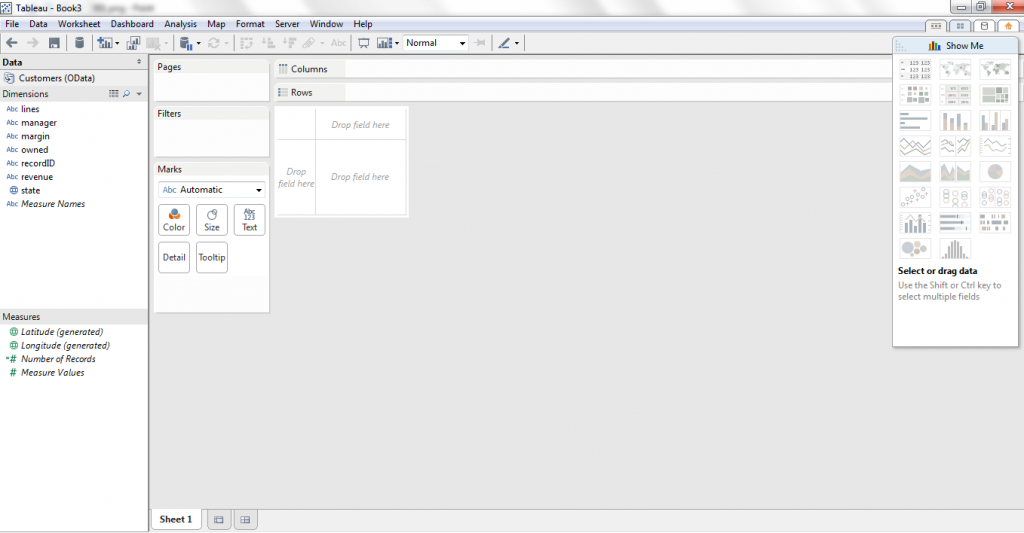

1. Connect to the Odata Producer from Tableau

2

2

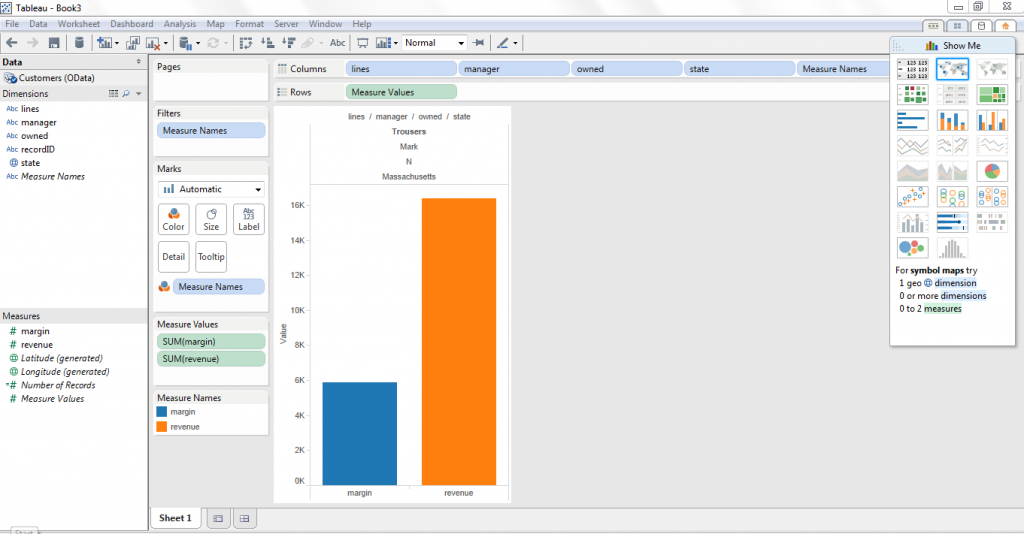

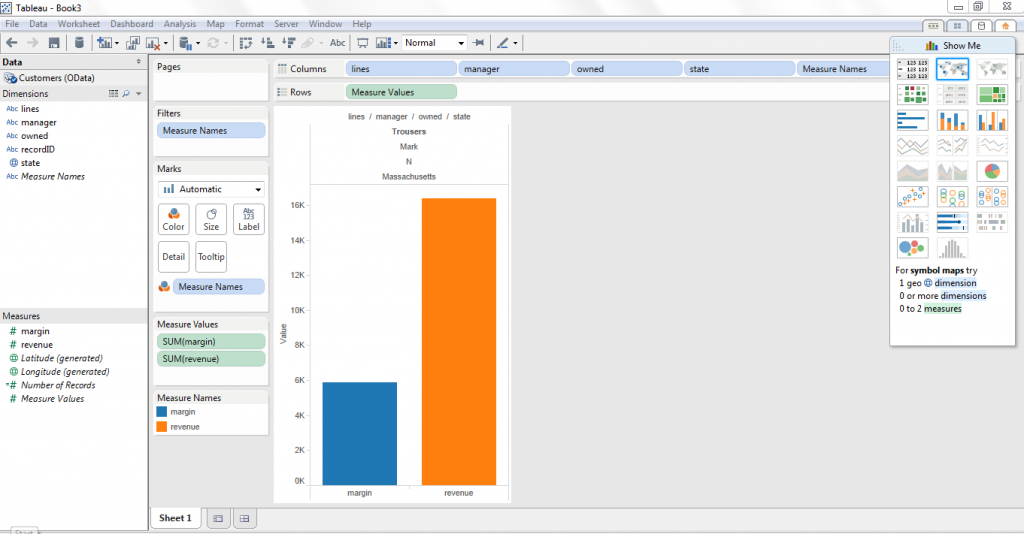

2. Data from OData producer via webi is available in Tableau to work with now

3. Setup a simple chart

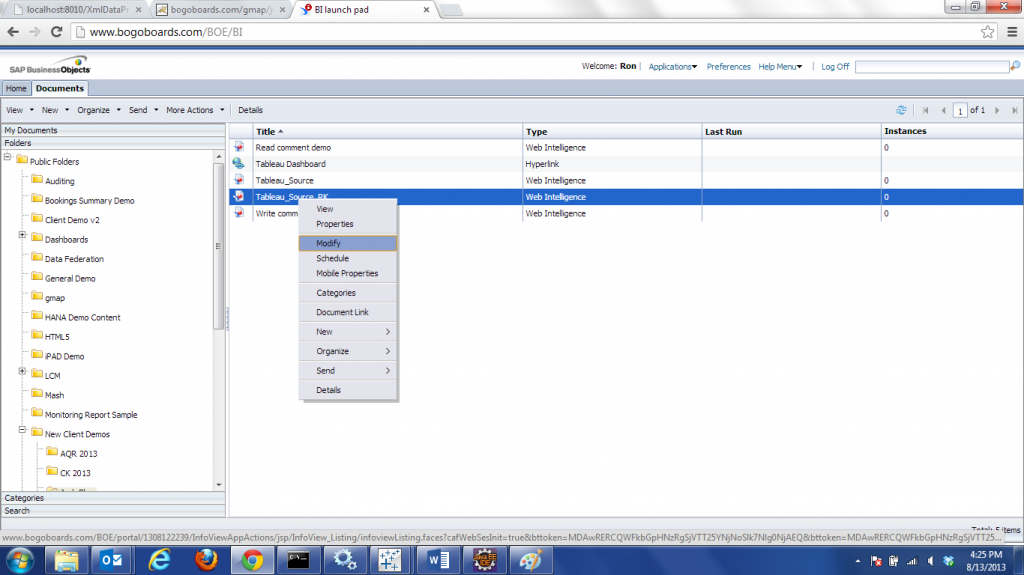

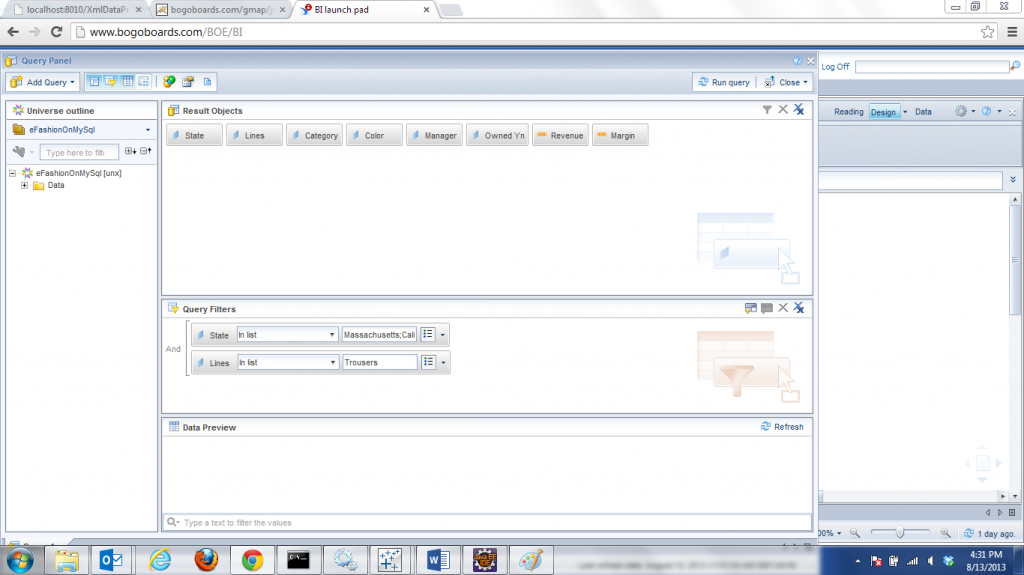

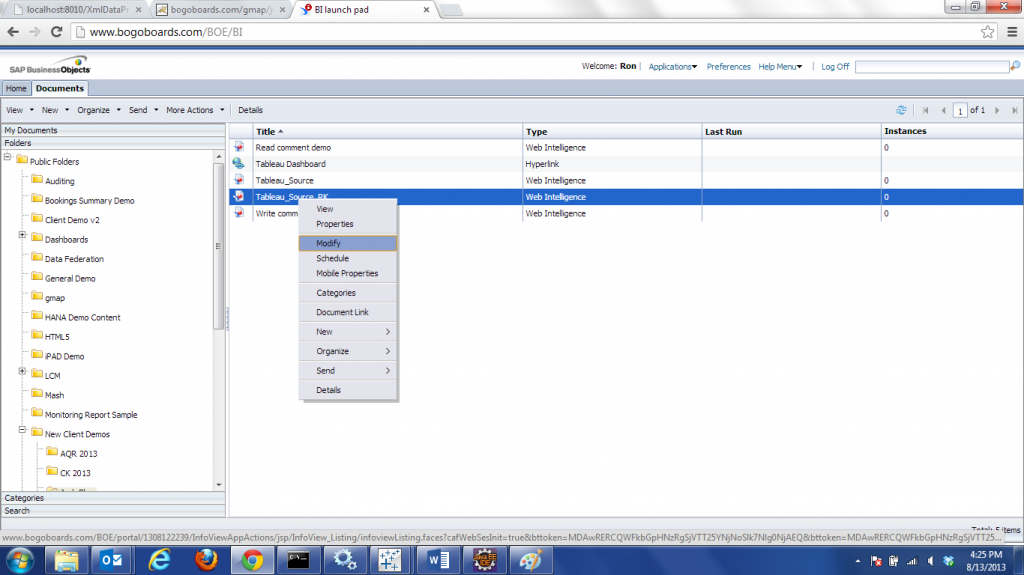

4. Now, go to the webi report that is exposed via the OData producer

5. Open the webi report

6. Modify the query to add more data

7. Save the modified webi

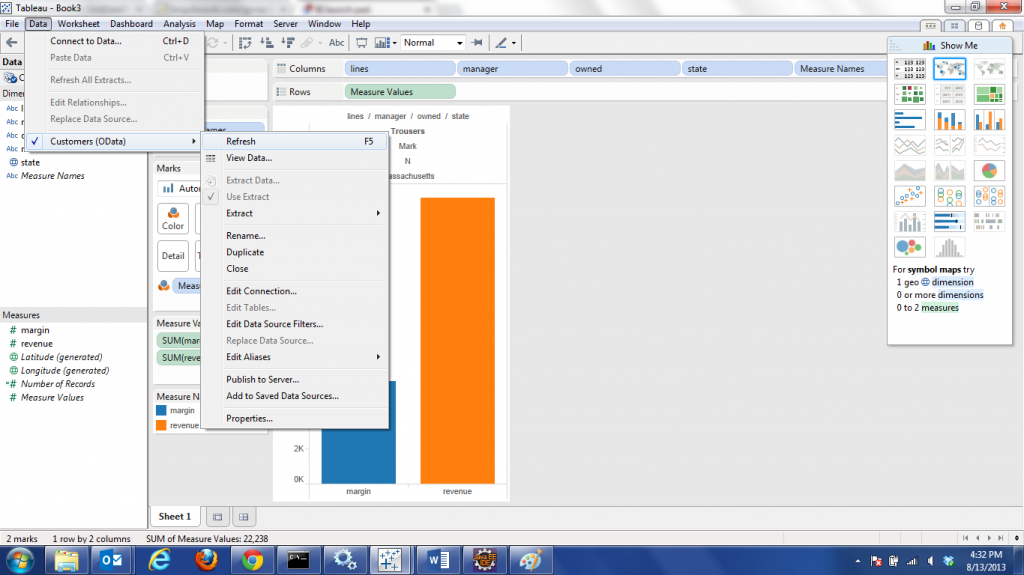

8. Now, switch back to Tableau and refresh the OData producer data source

9. Observe the new data flows through